First Seen

2023-05-24T22:39:04+00:00

simple-description (llama3.2-vision_11b)

The meme is a screenshot of a tweet criticizing the design of Tesla's Autopilot. The tweet sarcastically calls it "simply epic" to design the autopilot program to shut off less than a second before impact so that the company can deny it was the cause. Below the tweet is a news excerpt reporting that NHTSA found 16 separate instances in which Autopilot "aborted vehicle control less than one second prior to the first impact," and that CEO Elon Musk has often claimed accidents cannot be the company's fault because its data showed Autopilot was not active at the moment of the collision.

detailed-analysis (gemma3-27b-vision)

This analysis of the Twitter post is broken down into sections by theoretical framework. A single tweet can only be stretched so far into these frameworks, so some sections are more developed than others.

Visual Description

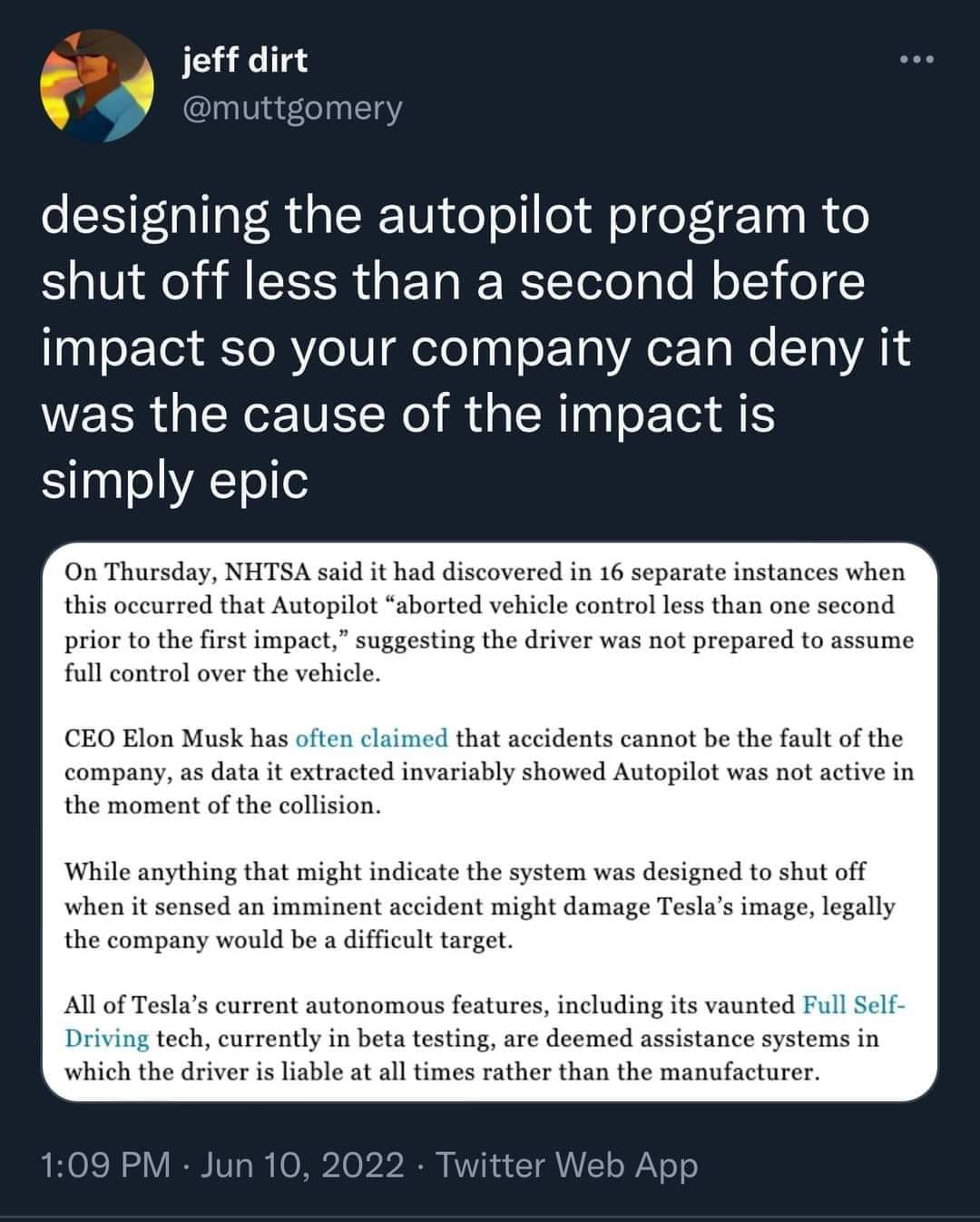

The image is a screenshot of a Twitter post from the account "@muttgomery" (display name "jeff dirt"). The background is the standard Twitter blue and white. The post consists of text, which is the focus of the analysis. The tweet reads "designing the autopilot program to shut off less than a second before impact so your company can deny it was the cause of the impact is simply epic", followed by a quoted news excerpt reporting that NHTSA found 16 instances in which Tesla's Autopilot disengaged less than one second before impact. The timestamp reads "1:09 PM - Jun 10, 2022", and the post is marked as sent via the "Twitter Web App."

Foucauldian Genealogical Discourse Analysis

This tweet touches upon a core Foucauldian concern: the construction of truth and responsibility through discourse and power relations.

- Discourse: The tweet exposes the discourse surrounding Tesla's Autopilot system. Tesla actively constructs a narrative where accidents are not the fault of the technology, but rather the driver. The language employed—framing the shutdown as a "design feature" rather than a potential safety flaw—is crucial. The claim of "epic" is sarcasm aimed at the deceptive narrative the company is trying to uphold.

- Power/Knowledge: The NHTSA report (mentioned in the tweet) represents a challenge to Tesla's constructed truth. The agency is attempting to exert power through knowledge – by uncovering the 16 instances of autopilot disengaging just before collisions. Tesla’s power is to control the narrative by controlling how accidents are represented.

- Genealogy: A genealogical investigation might trace the historical development of driver assistance technologies, how liability was assigned to drivers, and how Tesla strategically repositioned that discourse. This shifts the focus from technology that assists drivers to technology that is not at fault in accidents, effectively transferring the burden of responsibility.

Critical Theory

The tweet is a potent example of how technology is not neutral, but rather imbued with ideological values.

- Instrumental Reason: The design of the Autopilot system, as described, is presented as an example of instrumental reason pushed to its extreme. The goal is not simply to create a safe autonomous driving system, but to engineer a liability shield for the company. The function is designed to absolve the manufacturer of blame, even if it compromises safety.

- Domination: The practice potentially exercises domination over the driver. By designing a system that disengages just before an accident, Tesla subtly shifts responsibility and risks onto the driver—making them the fall guy. The company can avoid accountability while potentially downplaying the flaws of the technology.

- Commodification of safety: The tweet hints that safety itself is being commodified. Rather than genuinely improving safety with a better autopilot, Tesla appears to be selling the appearance of safety through effective PR and legal maneuvering.

Marxist Conflict Theory

From a Marxist perspective, the situation embodies a class conflict between the corporation (Tesla) and the drivers/consumers.

- Capital Accumulation: Tesla's focus on avoiding liability is fundamentally about protecting capital. By shifting blame to the driver, the company avoids costly lawsuits, maintains its brand image, and continues to accumulate capital.

- Exploitation: The drivers are potentially being exploited in this scenario. They are placed at risk (potentially through a flawed system) and made responsible for accidents that may not be their fault.

- Ideology: The narrative Tesla promotes – that accidents are the driver's fault – is an ideological construct designed to obscure the underlying power dynamics and protect the company's interests.

Postmodernism

The tweet exemplifies several postmodern themes:

- Deconstruction of Truth: The tweet exposes the constructed nature of "truth" surrounding accidents. There's no objective "fault"; it is a narrative that is actively built and manipulated. Tesla, in turn, works to dismantle any claim that fault lies with the company.

- Simulacra and Simulation: The tweet suggests a situation where the "real" (safety) is replaced by a simulacrum – the appearance of safety achieved through strategic design and narrative control. Tesla is more interested in creating the impression of safety than actually achieving it.

- Skepticism: The tweet promotes a healthy skepticism toward grand narratives of technological progress. It suggests that even seemingly innovative technologies can be driven by purely economic and legal considerations.

Queer Feminist Intersectional Analysis

This section is the most difficult to apply directly, as the tweet doesn't explicitly address gender, sexuality, or intersectionality. However, we can consider:

- Power imbalances: The scenario reveals a power imbalance between the corporation and the consumer, which can be amplified for marginalized groups. Those with less access to legal resources or social capital may be more vulnerable to unfair blame in an accident.

- Safety and vulnerability: The design flaw potentially creates a situation where certain demographics (e.g., less experienced drivers, drivers from marginalized communities who may face bias in legal proceedings) are disproportionately vulnerable to harm.

- Critique of "neutral" technology: The tweet challenges the notion of "neutral" technology. Even seemingly technical designs have social consequences, and those consequences may be unevenly distributed.

These frameworks are tools for analysis, and their application is always subject to interpretation.

simple-description (llama3.2-vision)

The meme is a screenshot of a Twitter post that criticizes a company's decision to use an automated driver-

tesseract-ocr

jeff dirt @muttgomery

designing the autopilot program to shut off less than a second before impact so your company can deny it was the cause of the impact is simply epic

On Thursday, NHTSA said it had discovered in 16 separate instances when this occurred that Autopilot “aborted vehicle control less than one second prior to the first impact,” suggesting the driver was not prepared to assume full control over the vehicle.

CEO Elon Musk has often claimed that accidents cannot be the fault of the company, as data it extracted invariably showed Autopilot was not active in the moment of the collision.

While anything that might indicate the system was designed to shut off when it sensed an imminent accident might damage Tesla’s image, legally the company would be a difficult target. All of Tesla’s current autonomous features, including its vaunted Full Self-Driving tech, currently in beta testing, are deemed assistance systems in which the driver is liable at all times rather than the manufacturer.

1:09 PM - Jun 10, 2022 - Twitter Web App