First Seen

2023-12-18T06:50:45+00:00

simple-description (llama3.2-vision_11b)

The meme is a graph showing the growth of various types of data in the field of Artificial Intelligence, with a humorous twist. The x-axis represents the year, and the y-axis represents the number of data points. The meme pokes fun at the idea that AI has become so complex that it's hard to keep track of all the data, with the caption "AI is getting so complex I'm starting to forget what I'm working on".

detailed-analysis (gemma3-27b-vision)

Okay, let's break down this graph of machine learning parameter counts over time through several theoretical lenses.

Visual Description

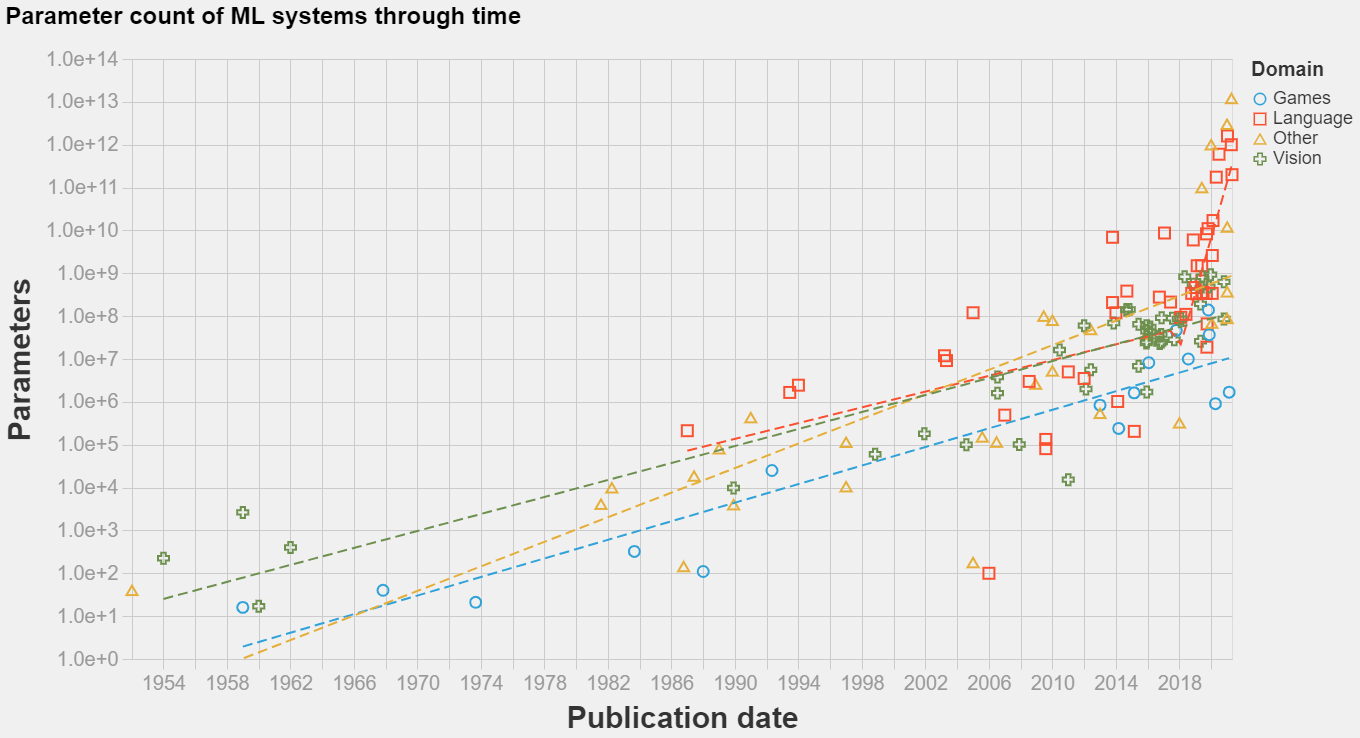

The graph is a scatter plot illustrating the trend of machine learning (ML) model parameter counts against the year of publication. The Y-axis is logarithmic, representing the number of parameters (ranging from 10⁰ to 10¹⁴), while the X-axis represents the publication year from 1954 to 2022. The plot is color-coded according to the domain of the machine learning task: Games (red triangles), Language (orange squares), Vision (green plus signs), and Other (blue circles).

A strong upward trend is evident, especially after 2010. Early data points (pre-2000) are sparse and show relatively low parameter counts. After 2010, the parameter counts increase exponentially. Language models (orange squares) appear to have the most dramatic increase, quickly surpassing the other domains in parameter size. Vision and Games generally have similar growth trends, while “Other” has a slower growth. The logarithmic scale obscures the rate of growth, but the rapid increase in the most recent years is still clearly visible.

Foucauldian Genealogical Discourse Analysis

This graph can be analyzed through a Foucauldian lens as a visualization of the discursive formations surrounding "intelligence" and "learning." Foucault focused on how knowledge is constructed through discourse and power relations.

- The Discourse of Scale: The graph documents the emergence and normalization of a "discourse of scale" in machine learning. Initially, ML models were built with relatively modest parameter counts. Over time, the discourse shifted toward an assumption that more parameters equal better performance. This is not necessarily a technologically determined truth but a historically contingent construction. The logarithmic scale ironically hides the accelerating drive to escalate parameters, representing a normalization of increasing resource usage.

- Power/Knowledge: This escalation of parameters isn't just a technical decision. It’s linked to power relations. The ability to train enormous models (requiring massive computational resources) becomes a source of power. Organizations (and nations) that can afford to build and deploy these models gain a significant advantage. Knowledge is produced through this power dynamic – what constitutes "good" ML is increasingly defined by the possibility of achieving results with these large models.

- Genealogical Trace: A genealogical analysis could trace the historical "pedigree" of this trend. What early assumptions and practices led to the emphasis on scaling? What contingencies and breaks shaped this development? For example, the availability of increasing compute power (Moore's Law) is a key material condition, but it doesn’t determine the drive toward larger models. The idea that larger models would inherently "understand" or "generalize" better is a discursive move that legitimized the scaling trend.

Critical Theory

From a Critical Theory perspective (drawing on the Frankfurt School, for example), the graph can be seen as a symptom of a broader instrumental rationality.

- Technological Rationality: The relentless increase in parameters reflects a technologically rational approach to problem-solving. The assumption is that complexity can be conquered through increasing computational power and scale. This prioritization of technical efficiency obscures the social, ethical, and political implications of these systems.

- Commodification and Control: The escalating parameter counts, and the compute power they demand, contribute to the commodification of intelligence. Intelligence is reduced to a calculable quantity that can be bought and sold. This also enhances the potential for social control. More powerful models can be used for surveillance, manipulation, and other forms of social engineering.

- Loss of Meaning: The drive for scale can also be seen as a symptom of a loss of meaning and purpose. The focus on quantitative metrics (number of parameters) obscures the qualitative dimensions of intelligence and understanding. What is the purpose of these ever-larger models? Who benefits? These questions are often sidelined.

Marxist Conflict Theory

Through a Marxist lens, the graph highlights the intensifying contradictions within the capitalist mode of production.

- Capital Accumulation: The escalating parameter counts represent a form of capital accumulation. The development and training of these models require massive investment in hardware, software, and personnel. This investment generates profits for companies that control these resources.

- Concentration of Power: The ability to train and deploy these models is concentrated in the hands of a few large corporations (e.g., Google, Microsoft, Meta). This leads to a further concentration of economic and political power.

- Exploitation of Labor and Resources: The computational infrastructure required for these models has a significant environmental footprint. The extraction of raw materials, the energy consumption of data centers, and the generation of e-waste all contribute to environmental degradation and the exploitation of labor. The increasing demand for GPUs, for example, has led to supply chain issues and inflated prices.

- Class Struggle: The benefits of these advanced models are not evenly distributed. They primarily accrue to those who own and control the technology, while the costs (environmental damage, job displacement) are often borne by marginalized communities.

Postmodernism

A postmodern reading would deconstruct the very notion of progress implied by the upward trend.

- Simulation and Hyperreality: The graph could be seen as evidence of a shift toward simulation and hyperreality. The models aren't necessarily representing "real" intelligence or understanding; they are creating sophisticated simulations of intelligence. The scale simply enhances the realism of the simulation.

- The Death of Grand Narratives: The graph challenges the idea of a linear progression towards "artificial general intelligence." The relentless pursuit of scale is just one possible path, and it may not lead to the promised land of truly intelligent machines.

- Deconstruction of Meaning: The increasing complexity of these models obscures their underlying logic and makes it increasingly difficult to understand how they work. Meaning is lost in the sea of parameters.

- Emphasis on Difference and Fragmentation: The color-coding of the graph highlights the diversity of applications of ML. There is no single, unifying goal; rather, there is a proliferation of specialized models tailored to specific tasks.

Queer Feminist Intersectional Analysis

This is perhaps the most challenging lens to apply directly, but important connections can be made:

- Data Bias and Representation: The graph, while representing sheer scale, obscures the underlying data these models are trained on. Historically, machine learning datasets have been overwhelmingly biased towards dominant groups (white, male, Western). The increase in parameters does not necessarily address these biases; in fact, it can amplify them. Larger models trained on biased data can perpetuate and exacerbate existing inequalities.

- Access and Exclusion: The resource requirements for training these large models create a barrier to entry for researchers and developers from marginalized communities. This perpetuates a system of exclusion.

- Algorithmic Harm: Biased algorithms can have disproportionately harmful effects on marginalized groups (e.g., in areas like criminal justice, healthcare, and employment). The increasing scale and complexity of these models make it more difficult to identify and mitigate these harms.

- Challenging Technological Determinism: A queer feminist perspective would challenge the notion that technology is neutral or objective. It would emphasize that technology is always shaped by social, cultural, and political forces, and that it can be used to reinforce or challenge existing power structures. The assumption that more parameters automatically lead to better outcomes is itself a normative claim that needs to be interrogated.

Let me know if you'd like any of these theoretical analyses expanded further, or if you'd like me to explore other theoretical lenses!

tesseract-ocr

Parameter count of ML systems through time 1.0e+14 Domain 1.0e+13 A © Games 1 Language 4.0e+12 af A Other & Vision 1.0e+11 nF 1.0e+10 oo ae 1.0e+9 n o — oe £ 1.0e+8 ro Age = 1.0047 a eiiog © Oe oO io ca E 1.0e+6 rR wf ee a <= gH 6° eA ssese- ean | On| 4 O 10645 or sz4ece A get eho H a ----| aL-r7 0 |_cle-P 1.0e+4 totes J Beg sata a Fs ab-To At La 1.0e+3 4 coer tee -e-T -te-T7] 9 1.06+2 * afeqee dest Ao An 1.0e+1 c Om jaet q 4.0240 2F= 1954 1958 1962 1966 1970 1974 1978 1982 1986 1990 1994 1998 2002 2006 2010 2014 2018 Publication date

simple-description (llama3.2-vision)

This meme is a chart that shows the growth of data in various fields, such as games, language, and other categories, over time. The chart has a logarithmic scale on the y-axis, indicating that the data is growing exponentially. The text at the top of the chart reads "The Data is Growing Exponentially" and the text at the bottom reads "When you are no longer the largest".