First Seen

2023-08-31T18:49:05+00:00

simple-description (llama3.2-vision_11b)

The meme is an image of a simple flowchart with two paths: "I want to learn AI" and "I want to make AI". The flowchart shows that the first path leads to "I want to learn AI" and the second path leads to "I want to make AI". The text at the top of the image reads "If you want to learn AI, you have to make AI".

detailed-analysis (gemma3-27b-vision)

This is an analysis of the Transformer model architecture diagram, broken down into sections by theoretical framework. Applying these frameworks to a technical diagram is interpretive: the aim is to find resonances and draw analogies, not to prove anything literally. Some sections are brief where the framework offers little direct applicability.

Visual Description

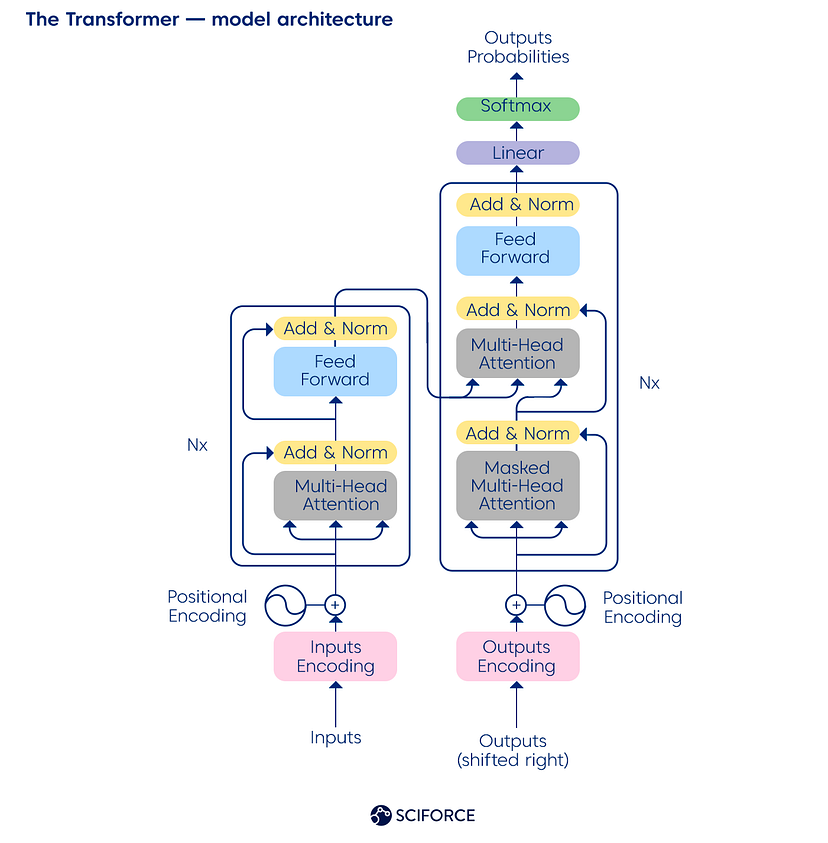

The diagram illustrates the architecture of a Transformer model, a deep learning model commonly used in natural language processing. It’s presented as two side-by-side stacks of blocks, one fed by "Inputs" and one fed by "Outputs (shifted right)", with data flowing from bottom to top.

Key elements include:

- Input & Output Layers: The bottom section shows the "Inputs" entering one stack and the "Outputs (shifted right)", the target sequence offset by one position, entering the other.

- Positional Encoding: Circled addition symbols at the base of each stack add a “Positional Encoding” to the input vectors so that they retain information about the order of elements in the sequence.

- Stacked Blocks: The core of the diagram consists of several identical blocks stacked on top of each other. Each block contains:

  - Multi-Head Attention: This is a central component, processing information in parallel via multiple “attention heads”.

  - “Add & Norm” Layers: These layers perform residual connections (adding the input to the output) and normalization, improving training stability and information flow.

  - Feed Forward: A standard feed-forward neural network layer.

- Output Layers: The top layers include a “Linear” layer and a “Softmax” layer, which turns the resulting scores into the final “Output Probabilities”.

- Nx: Indicates that the block it labels is repeated N times ("N×"), so each stack is N identical layers deep.
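The attention and “Add & Norm” steps listed above can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions, not the diagram's actual implementation: it uses a single attention head rather than several, omits the learned projection matrices and the feed-forward layer, and applies layer normalization without learned scale and shift. The function names (`softmax`, `attention`, `layer_norm`, `add_and_norm`) and the toy input are made up for this example.

```python
import math

def softmax(xs):
    """Turn a list of scores into probabilities that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Single-head scaled dot-product attention over lists of vectors.

    Assumption: no learned projections; a real block projects the inputs
    into queries, keys, and values first and runs several heads in parallel.
    """
    d_k = len(keys[0])
    width = len(values[0])
    out = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(width)])
    return out

def layer_norm(vec, eps=1e-5):
    """Normalize one vector to zero mean and roughly unit variance."""
    mean = sum(vec) / len(vec)
    var = sum((x - mean) ** 2 for x in vec) / len(vec)
    return [(x - mean) / math.sqrt(var + eps) for x in vec]

def add_and_norm(inputs, sublayer_outputs):
    """Residual connection (add) followed by layer normalization (norm)."""
    return [layer_norm([a + b for a, b in zip(x, y)])
            for x, y in zip(inputs, sublayer_outputs)]

# Toy sequence: 3 positions, model width 4 (arbitrary values).
x = [[1.0, 0.0, 1.0, 0.0],
     [0.0, 2.0, 0.0, 2.0],
     [1.0, 1.0, 1.0, 1.0]]

attended = attention(x, x, x)          # self-attention: queries = keys = values
block_out = add_and_norm(x, attended)  # same shape as x
```

Stacking N such blocks, as the “Nx” label suggests, would amount to feeding `block_out` back in as the next block's input.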

The diagram is visually clear, with distinct blocks and arrows showing the data flow. The color-coding helps to differentiate the different layers. The overall impression is of a complex yet organized system, designed for sequential data processing.
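Because attention itself has no notion of order, the “Positional Encoding” additions in the diagram are what make the system sensitive to sequence position. The sketch below assumes the sinusoidal scheme from the original Transformer paper; the diagram does not say which scheme it depicts, and learned position embeddings are a common alternative. The helper names `positional_encoding` and `add_positions` are hypothetical.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal position vectors: sine on even indices, cosine on odd ones."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Each sine/cosine pair shares one frequency.
            angle = pos / (10000 ** ((i - i % 2) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

def add_positions(embeddings):
    """Element-wise sum of embeddings and position vectors (the circled plus)."""
    pe = positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(emb, pos)] for emb, pos in zip(embeddings, pe)]

# The same token embedding repeated at three positions (arbitrary values).
same_token = [[0.5, 0.5, 0.5, 0.5] for _ in range(3)]
encoded = add_positions(same_token)
```

After the addition, the three identical embeddings differ from one another, which is how later layers can tell the positions apart.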

Foucauldian Genealogical Discourse Analysis

This framework emphasizes the relationship between power, knowledge, and discourse. The Transformer, as a technology, isn't inherently powerful itself, but it participates in a discourse around intelligence, language, and meaning.

- Episteme: The Transformer model represents a shift in the "episteme" (the underlying framework of knowledge) within the field of AI. It marks a move away from Recurrent Neural Networks (RNNs), which process a sequence one step at a time, toward parallel processing and attention mechanisms. This shift reflects a new way of understanding how language and information are processed.

- Disciplinary Power: The widespread adoption of Transformer models has created a certain “disciplinary power” within the NLP field. Researchers and engineers are often required to be familiar with these models, effectively creating a standard against which other approaches are measured. It influences research agendas, funding priorities, and career paths.

- Normalization: The “Add & Norm” layers within the model can be read as a metaphor for the ways in which discourse itself normalizes and regulates meaning. By standardizing data and controlling the flow of information, these layers contribute to the creation of a predictable and controlled system.

- Genealogy: Tracing the "genealogy" of the Transformer model reveals how it emerged from earlier work in attention mechanisms, sequence-to-sequence learning, and neural networks. This historical analysis reveals how seemingly "new" technologies are always built upon existing foundations.

Critical Theory

This section examines the potential for domination or oppression embedded within the model and its use.

- Technological Rationality: The Transformer model embodies a form of “technological rationality,” prioritizing efficiency and optimization above all else. This can lead to a reductionist view of language and meaning, treating them as mere data points to be processed.

- Commodification of Language: Transformer models are often used for commercial purposes, such as machine translation, chatbots, and content generation. This commodification of language can reinforce existing power structures and prioritize profit over meaning.

- Bias Amplification: Transformer models are trained on massive datasets, which often reflect societal biases. The model can then amplify these biases, leading to discriminatory or unfair outcomes. For instance, a translation model trained on biased data might reinforce gender stereotypes.

- The “Black Box” Problem: The complexity of Transformer models makes it difficult to understand how they arrive at their outputs. This "black box" nature can raise concerns about accountability and transparency, particularly in high-stakes applications.

Marxist Conflict Theory

This section looks at how the Transformer model might reinforce existing class structures.

- Control of the Means of Computation: The development and deployment of Transformer models require significant computational resources, which are concentrated in the hands of a few powerful corporations. This creates a “digital divide” between those who have access to these resources and those who do not.

- Labor Exploitation: Building Transformer models often depends on human work such as data labeling and output rating, which is frequently collected through crowdsourcing platforms where workers are paid low wages. This represents a form of “digital labor exploitation.”

- Concentration of Power: The ability to control and manipulate language through Transformer models gives those who own and control the technology significant power over information and public opinion. This power can be used to reinforce existing class structures and suppress dissent.

- Commodification of Knowledge: The model’s ability to generate human-quality text and code commodifies knowledge and creativity, potentially devaluing human skills and expertise.

Postmodernism

Postmodern thought challenges grand narratives, emphasizes subjectivity, and rejects the idea of objective truth.

- Deconstruction of Meaning: Transformer models, by breaking down language into numerical representations, can be seen as "deconstructing" meaning. This process undermines the idea that language has a fixed or inherent meaning.

- Simulation and Hyperreality: The ability of Transformer models to generate convincing text and images blurs the lines between reality and simulation. This can lead to a “hyperreality” where it becomes difficult to distinguish between what is real and what is artificial.

- Intertextuality: The Transformer’s capacity to generate novel text based on vast training datasets highlights the concept of "intertextuality," the idea that all texts are built upon and respond to other texts.

- Rejection of Universal Truths: Postmodernism rejects the notion of universal truths or objective knowledge. The Transformer model’s dependence on data and its capacity for subjective outputs align with this rejection.

Queer Feminist Intersectional Analysis

This section analyzes how the Transformer impacts gender, sexuality, race, and other intersecting identities.

- Data Bias and Representation: Transformer models are notoriously susceptible to biases present in their training data. This can lead to the perpetuation of harmful stereotypes related to gender, race, and sexuality. For example, a model might generate text that associates certain professions with specific genders or reinforces racist tropes.

- Marginalization of Non-Normative Identities: The datasets used to train these models often lack sufficient representation of marginalized identities, leading to outputs that are insensitive or exclusionary.

- Algorithmic Oppression: Biased algorithms can reinforce existing systems of oppression, particularly for marginalized groups. For instance, a chatbot trained on biased data might provide discriminatory responses to users from certain backgrounds.

- Power Dynamics in Data Collection: The process of data collection itself can be fraught with power imbalances. Marginalized communities may be disproportionately subjected to data extraction without their consent or benefit.

- Reclaiming the Narrative: Queer and feminist scholars are exploring ways to use Transformer models for positive social change, such as creating inclusive chatbots or generating counter-narratives to challenge dominant ideologies.

Important Disclaimer: These are interpretive readings, and there are many other ways to analyze this diagram. The goal is to demonstrate how different theoretical frameworks can be applied to a technical artifact to reveal its hidden assumptions, power dynamics, and social implications.

tesseract-ocr

The Transformer: model architecture. Output Probabilities. Linear. Feed Forward. Feed Forward. Nx. Nx. Positional Encoding. Positional Encoding. Inputs. Outputs (shifted right). SCIFORCE

simple-description (llama3.2-vision)

The meme is an image of a diagram showing the architecture of a transformer model, a type of artificial neural network. The diagram is labeled "The Transformer Model Architecture" and includes several components such as "Encoder", "Decoder", "Attention", and "Output". The text "Don't look at me like that" is written above the diagram, implying that the person is being told not to look at them in a certain way.