First Seen

2024-04-21T22:20:15.336827+00:00

simple-description (llama3.2-vision_11b)

The meme is a humorous image comparing the performance of two AI models, Meta AI's LLaMA 2 and Google's PaLM 2, in various tasks. The image shows a series of graphs comparing the two models' performance in areas such as language understanding, reasoning, and code generation, with LLaMA 2 consistently outperforming PaLM 2 in most categories.

detailed-analysis (gemma3-27b-vision)

Okay, here's an analysis of the provided image, broken down into sections as requested. I will only include sections where the topic seems relevant to the image's content. Given the nature of the image—graphs of model performance—the analysis will lean heavily toward critical and potentially postmodern interpretations, as the data represents something rather than being something directly.

Visual Description

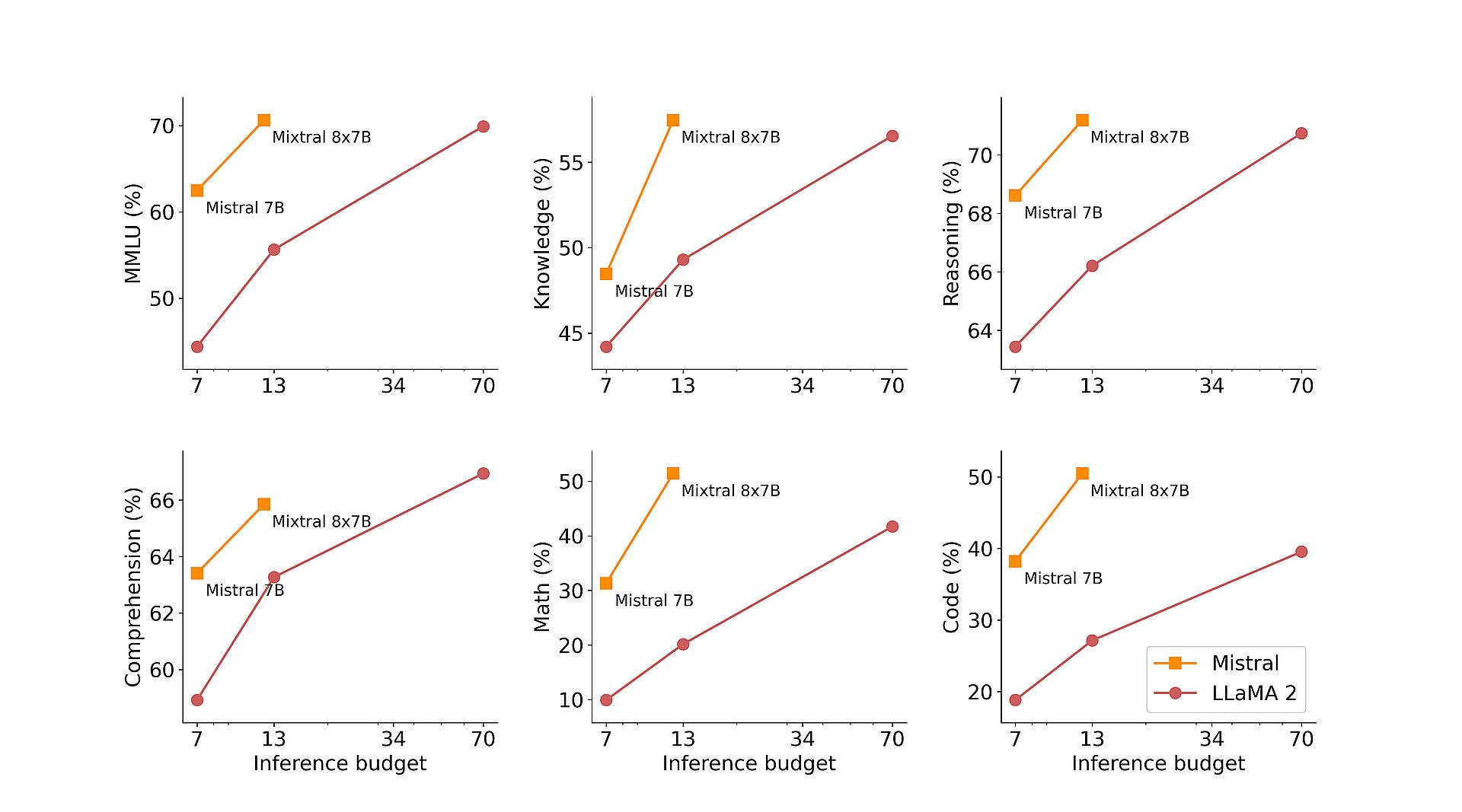

The image presents six line graphs, each illustrating the performance of two language models ("Mistral 7B" and "Mixtral 8x7B") across six different evaluation categories: MMLU, Knowledge, Reasoning, Comprehension, Math, and Code. The x-axis of each graph represents the "Inference Budget" – specifically, computational resources allocated during model evaluation – at four values: 7, 13, 34, and 70. The y-axis of each graph represents the percentage score attained by each model in the respective evaluation category.

Visually, Mixtral 8x7B consistently outperforms Mistral 7B across all categories. The performance gap widens as the inference budget increases. The graphs depict a clear positive correlation between inference budget and performance for both models, but the slope of the line for Mixtral 8x7B is consistently steeper, indicating a greater benefit from increased resources. The color scheme is simple: red for Mistral 7B and green for Mixtral 8x7B.

Critical Theory

The image presents a compelling example of how technical metrics (like percentages on benchmarks) construct our understanding of "intelligence" or "capability" in AI. Critical Theory encourages us to question the objectivity of these metrics. What do these "tests" actually measure? What values or assumptions are embedded within the evaluation categories themselves?

- The illusion of objectivity: The seemingly precise percentages invite a perception of objective comparison. However, the design of MMLU, Knowledge, Reasoning etc. inherently embodies certain cultural and cognitive biases. A high score on these tests doesn’t necessarily represent "true" intelligence; rather, it indicates proficiency in a set of skills valued by those who created the tests.

- Technological rationality: The emphasis on quantitative improvement (increasing percentages) reflects a “technological rationality” - a belief that problems can be solved through technical means, and that progress is inherently good if it can be measured numerically. This image, in a way, promotes that rationality, reinforcing the idea that "more" (inference budget) always leads to "better" (higher score).

- The Black Box: The internal workings of these models are often opaque ("black boxes"). We see the outputs (percentages), but not the processes by which they are generated. This opacity makes it difficult to critically assess the validity or fairness of the results. We are invited to trust the numbers without understanding their origins.

Postmodernism

The image lends itself well to a postmodern reading because it highlights the constructed nature of "performance" and "truth" in the context of AI.

- Simulation and Hyperreality: These graphs represent a simulation of intelligence, not intelligence itself. The scores are representations of performance on specific tasks, abstracted from the complex reality of cognition. This creates a “hyperreality” – a simulation that becomes more real than the real. We begin to evaluate AI models based on their scores in these simulations, rather than their ability to solve real-world problems.

- Deconstruction of Benchmarks: Postmodernism encourages us to “deconstruct” the categories used to evaluate AI. What is "Knowledge"? What does it mean to "Reason"? These concepts are not fixed or universal. They are fluid and culturally contingent. The image implicitly asks us to question the validity of these benchmarks as measures of genuine intelligence or competence.

- The Power of Representation: The graphs themselves are representations of data. The choice of axis, scale, and color all influence how we interpret the results. This highlights the role of representation in shaping our understanding of AI. The image is not simply a neutral presentation of facts; it is an act of construction.

Marxist Conflict Theory

A Marxist reading, while less direct, can be applied. The race to improve these models and the associated computational demands represent an allocation of resources within a capitalist system.

- Concentration of Power: The increasing demand for computational resources (as shown by the escalating inference budgets) benefits those with access to capital. Companies and institutions that can afford to invest in more powerful hardware gain a competitive advantage, leading to a concentration of power.

- Commodification of Intelligence: The ability to create and deploy high-performing AI models is becoming a valuable commodity. This commodification of intelligence can exacerbate existing inequalities and create new forms of exploitation.

- The Labor of Data: The creation of these models relies on vast amounts of data, often collected through the unpaid or underpaid labor of others. The image does not reveal this hidden labor, obscuring the social costs of AI development.

I have refrained from using the Foucauldian, Queer Feminist Intersectional analyses as they do not appear to be applicable to the dataset. Let me know if you would like me to expand on any of these interpretations or explore other analytical frameworks.

tesseract-ocr

70 Mixtral 8x7B Mixtral 8x7B Mixtral 8x7B 355 3/0 — so & S601 Mistral 7B o 268| Mistral 7B 2 3 cS = = 50 9 = 3 ; % 66 50 § Mistyél 7B w 45 64 7 13 34 70 7 13 34 70 7 13 34 70 = 50 Mixtral 8x7B 50 Mixtral 8x7B x 66 - Mixtral 8x7B 40 364 S $40 c ; = be Mistral 7B 2 Mistray7B 5 30)" Mistral 78 s giz = 8 30 E 20 6 60 —=- Mistral 10 20 —e LLaMA2 7 13 34 70 7 13 34 70 7 13 34 70 Inference budget Inference budget Inference budget

simple-description (llama3.2-vision)

The meme is an image of a graph with several lines, each representing a different AI model's performance on a task. The x-axis is labeled "Inference Budget" and the y-axis is labeled "Accuracy". The text on the image reads "The AI models are getting better at doing less work" and "We are still waiting for the AI that can do nothing".