First Seen

2024-04-19T08:06:05.123499+00:00

simple-description (llama3.2-vision_11b)

The meme is an image of a graph showing the performance of various AI models on the benchmark dataset, with the x-axis representing the number of parameters and the y-axis representing the performance. The graph shows that many of the models have a high number of parameters, but their performance is not as good as expected. The text "AI is not a one-size-fits-all solution" appears in the image, suggesting that the models are not as effective as they could be.

detailed-analysis (gemma3-27b-vision)

Okay, let's break down this graph of "MMLU Average %" versus "Number of Parameters" with a multifaceted analysis, addressing your requested theoretical lenses. I'll structure this into sections, only including those theoretical frameworks that have genuine relevance to the image and data. Given the nature of the graph, some lenses will be more compelling than others.

I. Visual Description

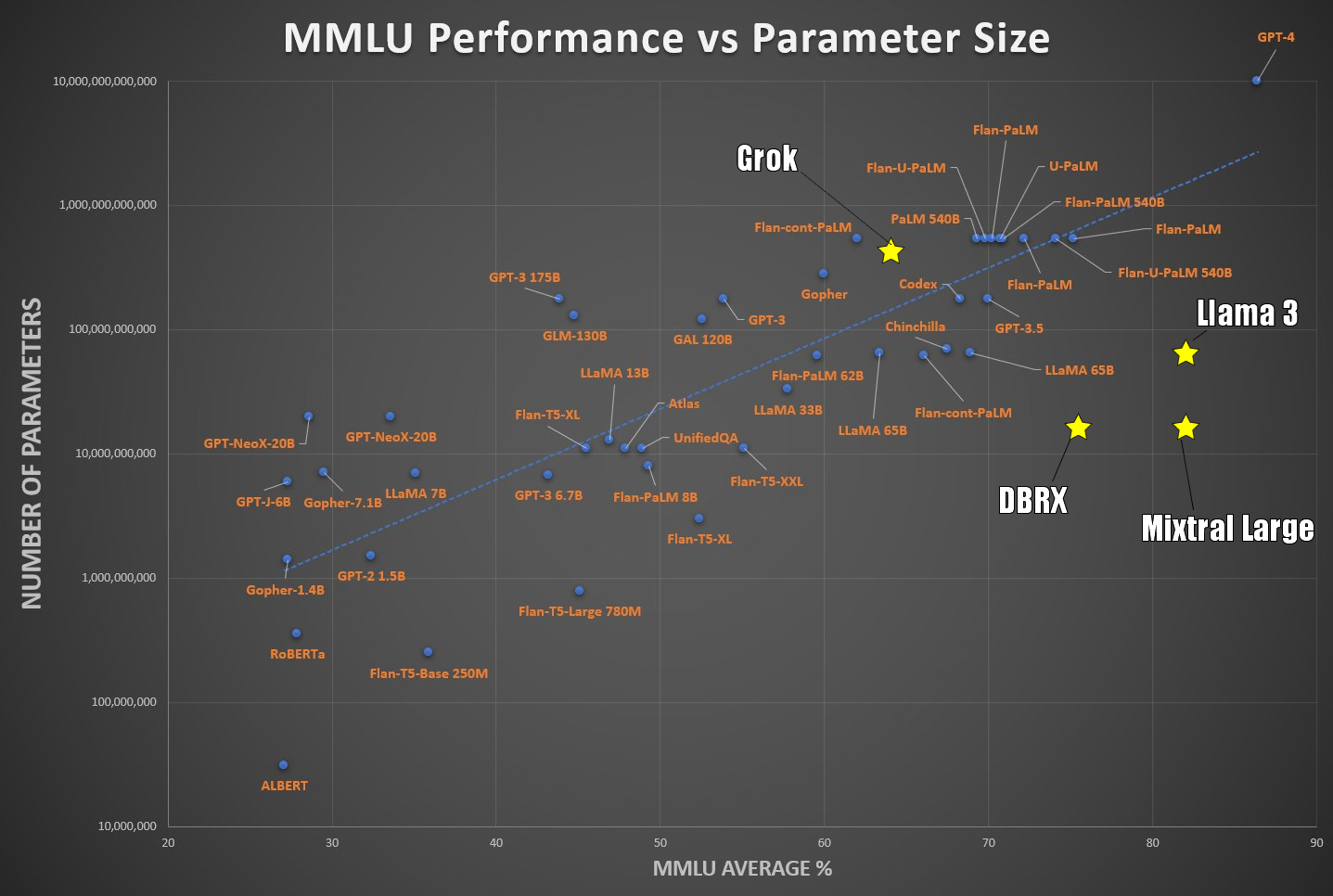

The image is a scatter plot displayed on a logarithmic scale, charting the relationship between the size of a language model (measured by its number of parameters on the Y-axis, ranging from 10,000 to 10 billion) and its performance on the MMLU (Massive Multitask Language Understanding) benchmark (measured in percentage on the X-axis, ranging from 20 to 90).

- Axes: The Y-axis is logarithmic, reflecting the vast range of model sizes. The X-axis is linear, representing the MMLU performance score.

- Data Points: Multiple data points are plotted, each representing a different language model. Each data point is a star.

- Trend: A clear positive correlation exists: generally, as the number of parameters increases, so does the MMLU performance. However, the relationship isn’t strictly linear. There’s diminishing return (the increase in MMLU score slows down for very large models).

- Clustering: There appears to be some clustering of models at certain performance and size levels, indicating common architectural choices or training methods.

- Notable Models: Models like GPT-4, Llama 3, and Mixtral are highlighted, potentially signifying leading-edge performance at a specific parameter size. The inclusion of model names is important; this isn’t just abstract data.

II. Critical Theory

This graph is ripe for application of Critical Theory, specifically the Frankfurt School’s concern with instrumental reason and the colonization of life.

- Quantification & Optimization: The graph itself is a manifestation of the drive to quantify cognitive ability (via MMLU) and optimize it through technological means (increasing parameters). The MMLU score, as a single number, attempts to capture the complexity of “understanding.” This is a key aspect of instrumental reason – reducing complex phenomena to measurable units for control and maximization.

- Technological Determinism: The positive correlation between parameters and performance might be interpreted as supporting a technological determinist view: that increasing computational power automatically leads to increased intelligence. However, Critical Theory would caution against this simplistic view. The graph doesn’t tell us how these models are trained, the data they are trained on, or the biases they might encode.

- The Illusion of Progress: The ascending trend appears to show relentless progress toward “better” AI. Critical Theory would ask: better for whom? And at what cost? The focus on MMLU (a specific type of assessment) might obscure other, equally important aspects of intelligence and knowledge. Is "better" only defined by performance on a standardized test?

- Commodification of Intelligence: The constant drive to create "bigger and better" models can be linked to the commodification of intelligence. These models are developed with potential profit in mind, and the focus is on improving their performance as products rather than contributing to broader human understanding.

III. Marxist Conflict Theory

While not as immediately apparent as with the other lenses, we can apply a Marxist perspective by focusing on resources and power dynamics.

- Resource Inequality: Building and training these massive language models requires enormous computational resources (energy, hardware, data). This creates a significant barrier to entry, concentrating power in the hands of a few large corporations and research institutions.

- Capital Accumulation: The development of these models is driven by the potential for capital accumulation. They’re seen as valuable assets that can be used to create new products and services, generating profit.

- The Digital Divide: While the graph doesn’t explicitly show the digital divide, the development and deployment of these models can exacerbate it. Access to the benefits of AI may be unevenly distributed, further marginalizing those who lack the resources to participate.

- Labor Exploitation: The creation of training datasets often relies on low-paid workers (data annotation, content moderation). This is a form of labor exploitation that is hidden within the technological infrastructure.

IV. Postmodernism

Postmodern analysis would focus on the instability of meaning and the constructed nature of "intelligence" and "understanding."

- Deconstruction of "Intelligence": The graph attempts to quantify "intelligence." A Postmodern perspective would question the very notion of a fixed, objective definition of intelligence. What does "understanding" mean? Is it simply the ability to perform well on a benchmark test?

- The Simulacrum: The models themselves can be seen as simulacra: representations that have become detached from any underlying reality. They can generate text that appears intelligent, but it's based on patterns and correlations in data, not on genuine understanding.

- The Death of the Author: The models can generate text in various styles, effectively “authoring” content without any intentionality or consciousness. This challenges the traditional notion of authorship.

- Relativism of Truth: The MMLU score is presented as an objective measure of performance, but a Postmodern perspective would emphasize the relativity of truth. The score is only meaningful within a specific framework (the MMLU benchmark), and different benchmarks might yield different results.

In conclusion:

This seemingly simple graph is a rich site for critical analysis. It raises questions about the nature of intelligence, the power dynamics that shape technological development, and the potential social consequences of AI. By applying these theoretical lenses, we can move beyond a purely technical understanding of the data and gain a deeper appreciation of the complex issues at stake.

tesseract-ocr

ROR eta col gar ite Deen 4 oe ston octon ki) as rT Grok CUS aU I Vaud ee oe sPantuntonnond iN ! by am Pens i Flan-cont-FalM, e ZC) eZ ; ae ena Ce) e as SS eee Vey Cy =< a eats Cae ony n ee ~ ces ~ oS = Llama 3 to ean 7 woe * Ss Tre Wel} ees — LlaMA 658 } a ~ } = r) 5 Cees i Ai ee LlaMA 338 ed A « = Btyoateetend ane aa >< Oy Aran LN ELE [o) By. ot Cees Pa Ce ee: a idea LE D I: RX ae e =, Fy ee coo We PED an) 2 Roomun yun) re GPT-21.5B Cee) 5 = eee e TL O Tsay e Pare ra Frode ° en Fron) Py Ey 40 50 ca 70 Ey Ey ROY St YAN (4

simple-description (llama3.2-vision)

This meme is a chart comparing the performance of various machine learning models, with the x-axis representing the model's size (in parameters) and the y-axis representing its performance. The chart shows that smaller models (like BERT) have poor performance, while larger models (like GPT-4) have better performance. The text "BERT is not the best" is written above the chart, implying that the model's performance is not the best.